In Part 1 of this new series about binaural sound (a very special recording technique intended for playback through headphones only), I started by giving some facts about the sound we hear and how we hear it. Here are some of those, plus a few more thrown in for clarity of explanation:

Every sound we hear is made up of, and perceived from, pressure waves in the surrounding medium (usually air, but we can also hear under water, and through other media, such as a steel rail, if we touch our ear to it). Those pressure waves are constantly moving at “the speed of sound” and, if they are consistently repeated to make up a single tone (a single “frequency”), they have a length (the “wavelength”) always equal to the speed of sound in the medium they’re passing through (again, usually air) divided by the frequency of that tone: the rate (not the number) of repetitions per second. In air, the speed of sound depends mainly on temperature and, to a much smaller degree, on humidity but, as a standard, the speed of sound in dry air at sea level at 68° Fahrenheit [20° C] is usually given as 1,125 feet per second [343 m/sec].
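The wavelength relationship fits in a few lines of Python. This is a minimal sketch, assuming the standard speed-of-sound figures quoted above (1,125 ft/sec or 343 m/sec); the name `wavelength` is just a label for this illustration.

```python
# Standard speed-of-sound figures quoted in the text:
# dry air, sea level, 68 °F (20 °C).
SPEED_FT_PER_S = 1125.0
SPEED_M_PER_S = 343.0


def wavelength(frequency_hz: float, speed: float = SPEED_FT_PER_S) -> float:
    """Wavelength = speed of sound / frequency, in the length unit of `speed`."""
    if frequency_hz <= 0:
        raise ValueError("frequency must be positive")
    return speed / frequency_hz


if __name__ == "__main__":
    # The edges of human hearing plus the 1 kHz reference tone.
    for f in (20.0, 1000.0, 20000.0):
        print(f"{f:>8.0f} Hz: {wavelength(f):.4f} ft, "
              f"{wavelength(f, SPEED_M_PER_S):.4f} m")
```

At 1 kHz this gives 1.125 ft (0.343 m); at 20 Hz the wave is 56.25 ft long, and at 20 kHz it is well under an inch.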

In the course of any one repetition, a single pressure wave (one sinewave of a tone) passes through 360° of “phase”: it starts at zero at 0°, rises to a positive peak at 90°, returns to zero at 180°, falls to a negative peak at 270° (which can also be written as -90°), and returns to zero at 360°. Every degree of phase corresponds to travel through the medium equal to 1/360th of the wavelength at that frequency in that medium. (Distance traveled per degree = wavelength ÷ 360 or, in air, 1,125 ft/sec ÷ frequency ÷ 360.)
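A short sketch of the same idea, under the same speed-of-sound assumption: it evaluates a unit sinewave at the phase angles named above and computes the distance traveled per degree of phase. The helper names are illustrative only.

```python
import math

SPEED_FT_PER_S = 1125.0  # dry air, sea level, 68 °F, as quoted in the text


def sine_at_phase(phase_deg: float) -> float:
    """Normalized pressure of a unit sinewave at the given phase angle."""
    value = math.sin(math.radians(phase_deg))
    # Round away floating-point noise (sin(180°) comes out near 1e-16, not 0),
    # and add 0.0 so that -0.0 prints as 0.0.
    return round(value, 12) + 0.0


def feet_per_degree(frequency_hz: float, speed: float = SPEED_FT_PER_S) -> float:
    """Distance the wave travels per degree of phase: wavelength / 360."""
    return speed / frequency_hz / 360.0


if __name__ == "__main__":
    for deg in (0, 90, 180, 270, 360):
        print(f"{deg:>3}°: {sine_at_phase(deg):+.1f}")
    print(f"1 kHz: {feet_per_degree(1000.0) * 12:.4f} inches per degree")
```

It prints the zero, positive peak, zero, negative peak, zero pattern described above, followed by 0.0375 inches per degree at 1 kHz.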

Besides its amplitude (how loud it is), the sound we hear is very much a matter of timing and of distance: “timing” in terms of the frequency (the “often-ness” of the repeating waveform), the duration (how long one waveform lasts), and the phase (positive or negative, and to what degree [no pun intended, but I’ll take it anyway]) of the pressure waves reaching our ears; and “distance” in terms of how far each pressure wave travels to get to them.

As I said last time, 1° of phase at 1 kHz in air equals 0.0375 inches of distance (calculated as: 1,125 ft/sec [343 m/sec] ÷ 1,000 Hz = a wavelength of 1.125 ft [34.29 cm]; 1.125 ft = 13.5″, and 13.5″ ÷ 360 = 0.0375″ [0.953 mm]). The distance between the ears on an average human head is around 6″ which, at 1 kHz, equals about 160° of phase (6″ ÷ 0.0375″ = 160 [or] 15.24 cm ÷ 0.0953 cm ≈ 160) or 0.4444 milliseconds of time (6″ = 0.5′; 0.5′ ÷ 1,125′ per second = 0.0004444 seconds).
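The arithmetic above can be checked with a small script. It assumes, as the text does, the 1,125 ft/sec (343 m/sec) speed of sound and a 6-inch ear spacing, and it treats the full ear spacing as extra path length, which is the situation for a sound arriving from directly to one side. The function names are hypothetical.

```python
SPEED_FT_PER_S = 1125.0
SPEED_M_PER_S = 343.0
INCHES_PER_FOOT = 12.0


def inches_per_degree(frequency_hz: float) -> float:
    """Path length per degree of phase, in inches."""
    return SPEED_FT_PER_S / frequency_hz / 360.0 * INCHES_PER_FOOT


def mm_per_degree(frequency_hz: float) -> float:
    """Path length per degree of phase, in millimetres."""
    return SPEED_M_PER_S / frequency_hz / 360.0 * 1000.0


def interaural_phase_deg(ear_spacing_in: float, frequency_hz: float) -> float:
    """Phase difference across the head, in degrees (not wrapped to 0-360)."""
    return ear_spacing_in / inches_per_degree(frequency_hz)


def interaural_delay_ms(ear_spacing_in: float) -> float:
    """Time for sound to cross the ear spacing, in milliseconds."""
    return ear_spacing_in / INCHES_PER_FOOT / SPEED_FT_PER_S * 1000.0


if __name__ == "__main__":
    print(f"{inches_per_degree(1000):.4f} in/degree, {mm_per_degree(1000):.3f} mm/degree")
    print(f"{interaural_phase_deg(6, 1000):.1f} degrees, {interaural_delay_ms(6):.4f} ms")
```

The time delay doesn't depend on frequency, but the phase does: the same 0.444 ms is 16° at 100 Hz and 1,600° (a little over four full cycles) at 10 kHz.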

Although human hearing has a decidedly non-“flat” frequency response, covering only about 20 Hz to 20 kHz (for a young person in good health; dogs hear up to 40 kHz and bats up to 80 kHz), and a not particularly impressive (by other animals’ standards) threshold-to-threshold amplitude response, we do have GREAT time and phase discrimination. Those are the abilities that matter most in locating objects by their sound, in judging the size and shape of a space by ear, and in enjoying the spatial aspects or unraveling the complexities of music: all things that audiophiles love.

Unfortunately, those things that our ears are good at are not what ordinary stereo recording is good at. They are, though, where binaural recording and headphone playback can really shine!

To explain how that works, instead of starting with stereo microphone and speaker placement, as I did last time, let me turn it around and talk, now, about how our ears work and how different that is from the recording and playback technologies we’re used to.

First, though, let’s talk about one very important similarity. When a sound (whether a pulse, a single tone, or the most complex music signal) falls on either an eardrum or a microphone diaphragm (to make it easy, I’m going to call them both “diaphragms”), it causes one single movement, because there is only one diaphragm per ear or microphone. That movement will be either forward or backward, depending on the polarity (positive or negative) of the total aggregate pressure (the sum of all of the positive and negative pressures acting on the diaphragm) at that instant. How far the diaphragm moves depends only on that total net pressure (the net amplitude of all of the pressures comprising the total), and what we hear, or what gets recorded in any medium, is nothing other than the position of the diaphragm at each instant. The number of instants that can be perceived or recorded is unlimited for our ears or an analogue medium, and limited by the sampling rate in a digital medium.
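Here is a minimal sketch of that idea, assuming an idealized diaphragm whose position simply follows the net pressure (a real eardrum or mic capsule has mass, damping, and resonances that this ignores). Each pressure component is modeled as a pure tone given as (amplitude, frequency, phase); the net value at any instant is their algebraic sum, and a digital recorder keeps that net value only at its sample instants. The names and the 48 kHz rate are illustrative assumptions.

```python
import math
from typing import List, Tuple

# One pressure component: (amplitude, frequency in Hz, phase in degrees).
Component = Tuple[float, float, float]


def net_pressure(t: float, components: List[Component]) -> float:
    """Algebraic sum of every component's pressure at time t (seconds).
    An idealized diaphragm's position is taken to be proportional to this."""
    return sum(a * math.sin(2 * math.pi * f * t + math.radians(p))
               for a, f, p in components)


def sample(components: List[Component], sample_rate_hz: float, n: int) -> List[float]:
    """What a digital recorder keeps: the net value at n discrete instants."""
    return [net_pressure(i / sample_rate_hz, components) for i in range(n)]


if __name__ == "__main__":
    in_phase = [(1.0, 1000.0, 0.0), (1.0, 1000.0, 0.0)]
    opposed = [(1.0, 1000.0, 0.0), (1.0, 1000.0, 180.0)]
    # In phase, two equal tones double the excursion; 180° apart they cancel.
    print(max(sample(in_phase, 48000, 48)))
    print(max(abs(x) for x in sample(opposed, 48000, 48)))
```

The first value comes out at essentially 2.0 and the second at essentially zero, apart from floating-point noise: the diaphragm only ever "knows" the sum, never the individual parts.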

Now consider that, in either our own hearing or the simplest stereo recording (two microphones usually spaced something like eight feet apart), when a sound happens (just for fun, let’s call it a marching band), both of our ears or both of our microphones will hear all of it. But unless it’s a “one-man band” (one guy playing a bunch of different instruments all by himself at the same time), and unless he’s standing still directly in front of us or directly between the two microphones, neither we nor the microphones are going to hear it all at the same time.
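To make that concrete, here is a sketch that places a few hypothetical band members along a line and computes when each one's sound arrives at each ear and each mic, plus the net pressure at each of those points. It assumes pure-tone sources, straight-line propagation at 1,125 ft/sec, and no loss of level with distance, no reflections, and no head shadowing; the positions and frequencies are made up for illustration, not taken from the text.

```python
import math
from typing import List, Tuple

SPEED_FT_PER_S = 1125.0  # dry air, sea level, 68 °F, as quoted in the text

Point = Tuple[float, float]          # (x, y) in feet; x = 0 is the center line
Source = Tuple[Point, float, float]  # (position, amplitude, frequency in Hz)


def arrival_ms(source: Point, receiver: Point) -> float:
    """Straight-line travel time from source to receiver, in milliseconds."""
    distance_ft = math.hypot(source[0] - receiver[0], source[1] - receiver[1])
    return distance_ft / SPEED_FT_PER_S * 1000.0


def received_pressure(t: float, receiver: Point, sources: List[Source]) -> float:
    """Net pressure at `receiver` at time t (seconds): the algebraic sum of
    every source's tone, each delayed by its own travel time."""
    total = 0.0
    for pos, amp, f in sources:
        delay_s = arrival_ms(pos, receiver) / 1000.0
        total += amp * math.sin(2 * math.pi * f * (t - delay_s))
    return total


if __name__ == "__main__":
    # Hypothetical band: three players spread across 50 ft, 20 ft in front.
    band = [((-25.0, 20.0), 1.0, 233.0),
            ((0.0, 20.0), 1.0, 440.0),
            ((25.0, 20.0), 1.0, 587.0)]
    receivers = {"left ear": (-0.25, 0.0), "right ear": (0.25, 0.0),
                 "left mic": (-4.0, 0.0), "right mic": (4.0, 0.0)}
    for name, r in receivers.items():
        times = ", ".join(f"{arrival_ms(pos, r):.2f}" for pos, _, _ in band)
        print(f"{name:>9}: arrivals {times} ms; net pressure at t=0.1 s: "
              f"{received_pressure(0.1, r, band):+.3f}")
```

Each receiver gets its own set of arrival times, so each one's diaphragm is driven by a different sum, even though all four "hear" the same three players.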

And that, the “Time/Distance/Phase Effect”, is where stereo falls down and binaural sound reigns supreme. Suppose our marching band (even if it’s standing still) is fifty feet long, from the first row of musicians to the last; our microphones are eight feet apart, centered on the band; and our ears are six inches apart, also centered on the band. Both the two mics and our ears will hear the whole fifty feet of band, meaning that the two eardrums and the two mic diaphragms will all be moved by the sound of it, with the total net movement of each diaphragm determined by the relative amplitudes of all of the sounds, at all of their frequencies, from all of their distances. (Remember that this is music, so instead of a single tone, it will be a complex waveform made up of the algebraic sum of all of the frequencies comprising it, each at a degree of phase determined by the distance of its source from the hearing or recording diaphragm.) That’s why stereo can never really sound like what we hear; it is actually “hearing” something different from what we would hear:

With both the ears and the mics exactly centered on the band (and presumably the same distance from it), and with the mics 7 ½ feet farther apart than the ears [8′ - 6″ = 7 ½′], each microphone will be 3.75 feet [7.5′ ÷ 2 = 3.75′] farther to the left or right of the center line than the corresponding ear. That means the sound from the right end of the band will travel as much as 3 ¾ feet (1.143 m) farther (and therefore take more time and arrive at a different phase) to reach the left microphone than to reach the left ear. The same holds true for the left-end-of-the-band sound reaching the right mic versus the right ear and, for both ears and both mics, for all of the sound originating in between. In short, the pressure-wave components summing algebraically to determine the position of the mic diaphragms and of the eardrums at any instant will be different, and therefore can’t possibly result in the same perceived or recorded sound.
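The geometry can be checked with a small sketch. It assumes the layout described above (ears 6 inches apart and mics 8 feet apart, both centered on a 50-foot band) plus a hypothetical distance from the band, and it uses straight-line paths only. The extra 3.75 ft is the limiting case, reached when the source lies on the same line as the ear and the mic; with the band 20 ft in front, the right-end sound's extra path to the left mic works out to roughly 3 ft, still six times the ear spacing.

```python
import math
from typing import Tuple

SPEED_FT_PER_S = 1125.0
Point = Tuple[float, float]  # (x, y) in feet; x = 0 is the band's center line


def path_ft(a: Point, b: Point) -> float:
    """Straight-line distance between two points, in feet."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def extra_path_ft(source: Point, ear: Point, mic: Point) -> float:
    """How much farther sound travels from `source` to `mic` than to `ear`."""
    return path_ft(source, mic) - path_ft(source, ear)


def describe(extra_ft: float, frequency_hz: float) -> Tuple[float, float]:
    """Convert extra path into extra time (ms) and extra phase (degrees, unwrapped)."""
    delay_ms = extra_ft / SPEED_FT_PER_S * 1000.0
    phase_deg = extra_ft / (SPEED_FT_PER_S / frequency_hz) * 360.0
    return delay_ms, phase_deg


if __name__ == "__main__":
    left_ear, left_mic = (-0.25, 0.0), (-4.0, 0.0)
    for band_distance in (0.0, 20.0):  # 0.0 = source in line with ear and mic
        right_end = (25.0, band_distance)
        extra = extra_path_ft(right_end, left_ear, left_mic)
        ms, deg = describe(extra, 1000.0)
        print(f"band {band_distance:>4.0f} ft away: +{extra:.3f} ft, "
              f"+{ms:.3f} ms, +{deg:.0f}° at 1 kHz")
```

In the in-line case the extra 3.75 ft is 3.333 ms, or 1,200° (three and a third cycles) at 1 kHz.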

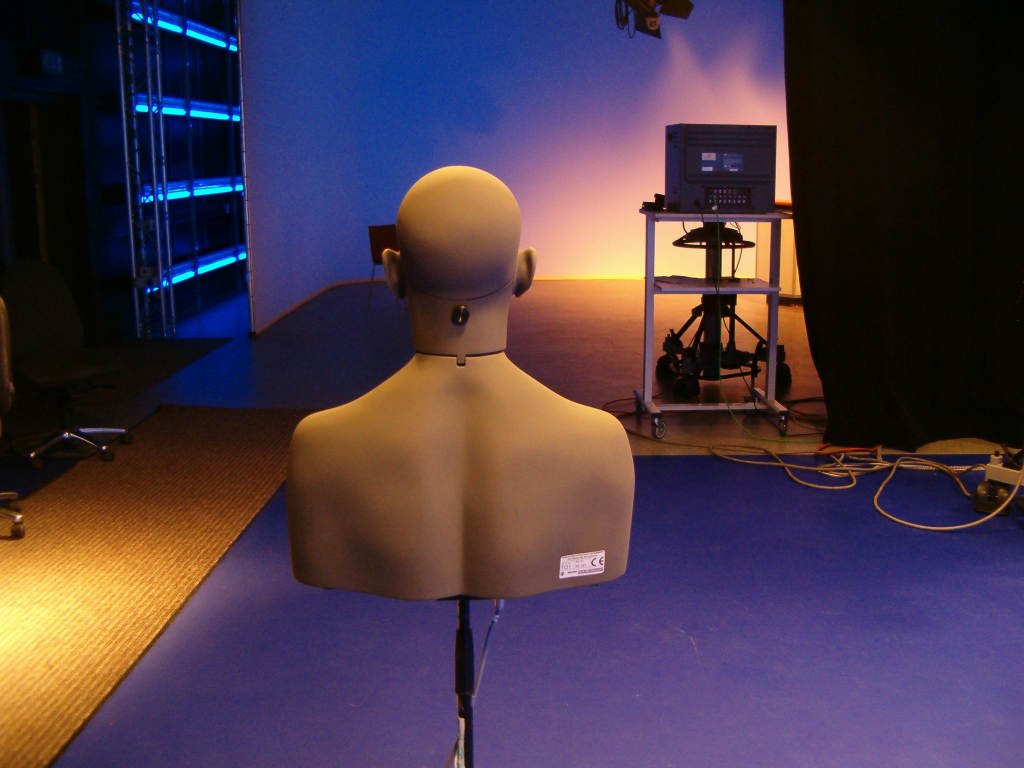

With binaural recording, though, the recording is made with two small microphones mounted in a dummy head, positioned to mimic, as closely as possible, the position of the eardrums and the spacing of the ears on a real human head. The head itself is placed just where a listener’s head would be if listening “live” to the same performance. Because binaural, unlike stereo, does “hear” just the way we do, and is played back only through headphones, it can provide time-perfect and phase-perfect recordings.

More next time; see you then!