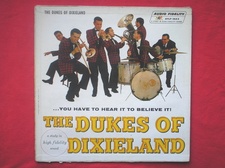

I remember when I first got into stereo. It was with pre-recorded two-channel tapes, and it was all about right and left: jet planes and locomotives, both steam and diesel, running through the listening room. We could tell precisely, on a line drawn between our two stereo speakers, where each player of The Dukes of Dixieland was playing relative to each of the others. That was palpable magic at the time to me and to my Hi-Fi Crazy buddies and, judging from what happened when stereo came to the vinyl LP in 1957, to everybody else as well. For perhaps as long as three or four decades thereafter, stereo was a popular hit with both audiophiles and the mainstream consumer market.

At the same time, though, there was something that was in many ways a lot better; that most people at the time had never heard of; and that many, even to this day, have never experienced: binaural sound – sound recorded the way we actually hear it, and played back through headphones instead of loudspeakers.

There’s a reason why we have two ears, and it isn’t just so that we’ll have a “spare” available if one goes bad. It all has to do with eating: For most non-sessile animals, it’s important to have two ears because doing so allows them to determine the location, movement, and velocity of something that they might want to eat or that might want to eat them.

With two ears and sufficient “processing” capability (brain function, which even the lowest animals have to a surprising degree), it’s possible to “place” things, in both distance and direction, with an astonishing degree of precision, simply by registering at which ear a sound arrives first and by how much time the two arrivals differ. That makes it possible to find things to eat, or to get warning of, and possibly avoid, things that are trying to eat you.

When we were omnivorous hunter-gatherers, that capability aided us tremendously, and may have been an important factor in our species’ survival to this day. Now, though, we’re mostly free from predators, and the things in grocery stores tend to be silent, giving us little clue of their whereabouts so we can select them. That may be why we were forced, over time, to create locomotives, jet planes, The Dukes of Dixieland, marching bands, music, and stereo systems to put our two ears to use.

If you stop to think about it, most of sound is about time. When describing it technically, two of the main things we talk or write about are amplitude (how LOUD it is) and frequency: for a single tone, how many times per second a sinusoidal pressure wave (the basic element of what we hear as sound) is repeated, which is purely a timing function. Time is one of the absolute basics of sound, and so is phase, which is itself a time function: every sine wave consists of 360 degrees of phase (180 degrees positive plus 180 degrees negative), each lasting 1/360th of the wave’s duration. Thus, if the speed of sound in air at sea level (at “normal” temperature and pressure) is taken to be 1,125 feet per second (343 m/sec) and we’re talking about a 1kHz (1,000 “cycles” per second) sine wave, its wavelength will be 1.125 ft (0.343 m) (1,125 ft ÷ 1,000 = 1.125 ft), or 13.5 inches (34.29 cm), and each degree of phase will be 0.0375 inches (0.953 mm) long (13.5″ ÷ 360 = 0.0375″, or 34.29 cm ÷ 360 = 0.0953 cm = 0.953 mm).
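For anyone who would like to check that arithmetic, or try it at other frequencies, here is a minimal Python sketch. The function names are my own invention for illustration, and the 1,125 ft/sec speed of sound is simply the round figure used above, not a measured value.

```python
# Rough check of the wavelength and per-degree figures above.
# Assumption: speed of sound = 1,125 ft/sec (the round figure used in the text).
SPEED_OF_SOUND_FT_S = 1125.0
INCHES_PER_FOOT = 12.0
MM_PER_INCH = 25.4


def wavelength_inches(freq_hz, speed_ft_s=SPEED_OF_SOUND_FT_S):
    """Length of one full cycle of a tone, in inches."""
    return speed_ft_s / freq_hz * INCHES_PER_FOOT


def inches_per_degree(freq_hz, speed_ft_s=SPEED_OF_SOUND_FT_S):
    """Distance sound travels during one degree of phase, in inches."""
    return wavelength_inches(freq_hz, speed_ft_s) / 360.0


if __name__ == "__main__":
    wl = wavelength_inches(1000.0)
    per_deg = inches_per_degree(1000.0)
    print(f"1 kHz wavelength: {wl:.2f} in")
    print(f"one degree of phase: {per_deg:.4f} in = {per_deg * MM_PER_INCH:.3f} mm")
```

Doubling the frequency halves both numbers, which is why the same path difference means more degrees of phase as the pitch goes up.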

Where all this becomes important is when you consider that the ear-to-ear distance through the average head is something like 6 inches (15.24 cm). For sound at 1kHz, that distance is equal to 160° of phase (6″ ÷ 0.0375″ = 160, or 15.24 cm ÷ 0.0953 cm = 160). That means that if a 1kHz tone were to arrive at your left ear from a source directly facing that ear at (by some fortuitous happenstance) a point on its waveform corresponding to 0° of phase, then by the time it had traveled the additional 6 inches or 15.24 cm to your right ear, it would arrive 0.0004444 seconds (0.4444 milliseconds) later (6″ = 0.5′; 0.5′ ÷ 1,125′ per second = 0.0004444 seconds) and 160° different in phase. If, on the other hand, that 1kHz tone came from directly in front of you, instead of from 90° to your left, both of your ears would hear it at the same time and in the same phase. That’s how time and phase help us to tell the direction and distance that sounds are coming from (and, of course, to locate the things, instruments, or people that are making them).
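The two cases above (sound from directly to one side, and sound from straight ahead) can be sketched in a few lines of Python. Please treat this as a rough illustration only: it assumes the extra path to the far ear is simply the ear spacing times the sine of the source angle, which ignores the way sound actually bends around a real head, and the function names are hypothetical.

```python
import math

# Assumptions: 6-inch ear spacing and 1,125 ft/sec speed of sound, as in the text.
EAR_SPACING_IN = 6.0
SPEED_OF_SOUND_FT_S = 1125.0


def interaural_delay_s(azimuth_deg, ear_spacing_in=EAR_SPACING_IN,
                       speed_ft_s=SPEED_OF_SOUND_FT_S):
    """Arrival-time difference between the ears, in seconds.

    azimuth_deg: 0 = straight ahead, 90 = directly to one side.
    Simplified straight-line model: extra path = spacing * sin(azimuth).
    """
    extra_path_ft = (ear_spacing_in / 12.0) * math.sin(math.radians(azimuth_deg))
    return extra_path_ft / speed_ft_s


def phase_difference_deg(freq_hz, delay_s):
    """Phase difference, in degrees, produced by a given delay at a given frequency."""
    return 360.0 * freq_hz * delay_s


if __name__ == "__main__":
    for azimuth in (0.0, 90.0):
        delay = interaural_delay_s(azimuth)
        phase = phase_difference_deg(1000.0, delay)
        print(f"azimuth {azimuth:>4.0f} deg: {delay * 1000:.4f} ms, {phase:.1f} deg at 1 kHz")
```

With the source at 90° the sketch reproduces the 0.4444 milliseconds and 160° worked out above; with the source straight ahead both come out to zero.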

It’s also one of the reasons why binaural sound can be better than stereo. The earliest two-channel stereo (and what seems to be an increasing number of modern audiophile recordings, too) was recorded with just two broadly spaced (eight feet apart or thereabouts) omnidirectional microphones placed directly above and/or in front of the performers. With just one mic per channel, and with the mics placed (except for height) about as far apart as the playback speakers would be, each mic became functionally analogous to one of the ears of the ultimate listener, and one would expect that a reasonably accurate stereo presentation could be produced.

The problem is that, even with that simple setup, both of the microphones can hear (except as attenuated by distance) all of the sound coming from all of the players, instead of the left mic hearing only the players on the left side and the right mic hearing only the players on the right.

“Well, so what?” you might ask. “Don’t our ears do exactly the same thing?” And you might even add that, because the microphones and the speakers playing back their recordings are (roughly) the same distance apart, the time differences between (for example) the right-side sound heard by the left mic and the right-side sound played back by the left-side speaker (and vice versa) should be the same for both mics and for both speakers.

And that would be exactly right, except that the distance between our ears is only about 6 inches, instead of the 8 feet (16 times as much) between the two microphones or the two playback speakers. That means that the difference in time and in phase that will be recorded and played back by those microphones and speakers – the auditory “parallax” (if I may coin a new term) – will be far greater than what we would hear if we were listening to the same live performance with our own ears instead of a pair of microphones. And that will mean that, no matter how good our system is, it can never really get the time and phase right, and sound like live music.
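To put rough numbers on that “parallax”, here is a small Python sketch comparing the largest possible arrival-time difference across a 6-inch head with the largest possible difference across a pair of mics 8 feet apart. It is only an illustration under the same assumptions as before (1,125 ft/sec, sound arriving from directly to one side, simple straight-line paths), and the names in it are made up for the purpose.

```python
# Assumption: speed of sound = 1,125 ft/sec, as used earlier in the article.
SPEED_OF_SOUND_FT_S = 1125.0


def max_delay_ms(spacing_ft, speed_ft_s=SPEED_OF_SOUND_FT_S):
    """Largest arrival-time difference (source directly to one side), in milliseconds."""
    return spacing_ft / speed_ft_s * 1000.0


def phase_deg(freq_hz, delay_ms):
    """Phase difference in degrees for a given delay at a given frequency."""
    return 360.0 * freq_hz * delay_ms / 1000.0


EARS_FT = 0.5   # about 6 inches between the ears
MICS_FT = 8.0   # spaced omnidirectional microphones

ears_ms = max_delay_ms(EARS_FT)
mics_ms = max_delay_ms(MICS_FT)

if __name__ == "__main__":
    print(f"ears: {ears_ms:.3f} ms, {phase_deg(1000.0, ears_ms):.0f} deg at 1 kHz")
    print(f"mics: {mics_ms:.3f} ms, {phase_deg(1000.0, mics_ms):.0f} deg at 1 kHz")
    print(f"ratio: {mics_ms / ears_ms:.0f}x")
```

At 1kHz the spaced mics can be more than seven full cycles apart, where our ears would never be even half a cycle apart.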

With binaural recording, though, and playback through headphones, it’s easy. I’ll go into it in more detail next time.

See you then!