When you conduct audio tests with multiple listeners, or read several reviewers’ opinions of a single product, one of the biggest challenges is understanding the different ways they describe sound. They’re all hearing the same products, and more often than not they reach similar assessments of each product’s performance. But it can take a long time to figure that out, as you try to match one person’s audio jargon to another’s. You can also run into this problem if you read a lot of audio research papers; each researcher tends to ask test subjects to use a different set of terms to describe what they hear.

A paper titled “Categorization of Sound Attributes for Audio Quality Assessment — A Lexical Study,” appearing in the November 2014 issue of the Journal of the Audio Engineering Society, attempts to make some headway in solving this problem. This isn’t your usual audio research project, in which subjects sit in a lab of some sort doing blind listening tests. In this study, there was no listening at all, just careful consideration from two groups of audio experts in an effort to isolate the most useful terms for describing audio performance.

Step 1 was to compile a list of terms used in previous audio studies. These terms were vetted by a group of 12 experts who regularly participate in audio testing. Any term that at least six of these experts (half the group) considered irrelevant for audio testing was thrown out. Then any term that wasn’t cited in at least two of the published audio studies the authors reviewed was also discarded. This process resulted in a list of 28 terms.
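The two filtering rules in Step 1 are simple enough to express as a short Python sketch. Only the thresholds (rejection by six or more of the 12 experts, citation in fewer than two studies) come from the study description; the `CandidateTerm` class, the `survives_step1` helper, and the term names and counts are hypothetical placeholders, not the paper’s data.

```python
from dataclasses import dataclass

# Thresholds from the Step 1 description; everything else here is illustrative.
REJECT_VOTES = 6    # dropped if at least 6 of the 12 experts call it irrelevant
MIN_CITATIONS = 2   # dropped if cited in fewer than 2 published studies


@dataclass
class CandidateTerm:
    name: str
    irrelevant_votes: int  # how many of the 12 experts rejected the term
    citations: int         # how many reviewed studies used the term


def survives_step1(term: CandidateTerm) -> bool:
    """Apply both Step 1 filters: expert vetting, then citation count."""
    return term.irrelevant_votes < REJECT_VOTES and term.citations >= MIN_CITATIONS


# Hypothetical candidates, not data from the paper.
candidates = [
    CandidateTerm("brightness", irrelevant_votes=1, citations=9),
    CandidateTerm("punch", irrelevant_votes=7, citations=4),     # too many rejections
    CandidateTerm("airiness", irrelevant_votes=2, citations=1),  # cited only once
    CandidateTerm("width", irrelevant_votes=5, citations=2),     # just inside both limits
]

shortlist = [t.name for t in candidates if survives_step1(t)]
print(shortlist)  # ['brightness', 'width']
```

Because both rules only remove terms, applying them in either order gives the same shortlist.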

Step 2 was to have a test panel of 18 musicians, sound engineers and audio researchers evaluate these terms by considering every possible pair and rating how similar the two terms were on a 100-point scale.
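“Every possible pair” of 28 terms works out to 28 × 27 / 2 = 378 pairs per panelist, which shows how much effort Step 2 demanded. The sketch below enumerates those pairs and averages one pair’s ratings across panelists. The term names are placeholders, and the 0 to 100 range, the per-panelist dictionary layout and the `mean_similarity` helper are assumptions for illustration, not details taken from the paper.

```python
from itertools import combinations
from math import comb

N_TERMS = 28
# Placeholder names; the paper's actual 28 terms are not reproduced here.
terms = [f"term_{i:02d}" for i in range(1, N_TERMS + 1)]

# Every unordered pair of distinct terms is rated once by each panelist.
pairs = list(combinations(terms, 2))
print(len(pairs), comb(N_TERMS, 2))  # 378 378


def mean_similarity(
    panel: list[dict[tuple[str, str], float]],
) -> dict[tuple[str, str], float]:
    """Average each pair's similarity rating (assumed 0-100) over all panelists.

    `panel` holds one dict per panelist, mapping a term pair to that rating.
    """
    return {
        pair: sum(ratings[pair] for ratings in panel) / len(panel)
        for pair in panel[0]
    }


# Two hypothetical panelists rating a single pair.
print(mean_similarity([{("brightness", "clarity"): 80},
                       {("brightness", "clarity"): 60}]))
# {('brightness', 'clarity'): 70.0}
```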

Step 3 was to have the same 18 panelists sort the terms freely, grouping them into whatever categories they saw fit.
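Free-sorting results like these are often summarized by counting, for each pair of terms, how many panelists put both terms in the same group. The article doesn’t say exactly how the authors tallied Step 3, so treat this as a hypothetical sketch: the `co_occurrence` helper and the two example sortings are invented for illustration.

```python
from collections import Counter
from itertools import combinations


def co_occurrence(sortings: list[list[set[str]]]) -> Counter:
    """Count, for each pair of terms, how many panelists grouped them together.

    Each panelist's sorting is a list of groups (sets of term names).
    Pairs are stored alphabetically, so ("a", "b") and ("b", "a") share a count.
    """
    counts: Counter = Counter()
    for groups in sortings:
        for group in groups:
            for a, b in combinations(sorted(group), 2):
                counts[(a, b)] += 1
    return counts


# Two hypothetical panelists' free sortings.
panelist_1 = [{"brightness", "clarity"}, {"width", "depth"}, {"noise"}]
panelist_2 = [{"brightness", "clarity", "noise"}, {"width", "depth"}]

counts = co_occurrence([panelist_1, panelist_2])
print(counts[("brightness", "clarity")])  # 2
print(counts[("brightness", "noise")])    # 1
print(counts[("brightness", "width")])    # 0
```

Dividing each count by the number of panelists gives a 0 to 1 similarity that can sit alongside the Step 2 ratings.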

Step 4 was to perform a statistical analysis of these results to determine how the terms could be grouped. Grouping the terms makes it much easier for test subjects to grasp their meaning and to make accurate, consistent assessments. One of the hardest parts of participating in a controlled audio test with predefined assessment categories is “getting all the blanks filled in” by the time the test material has finished playing; it’s much easier if you can think in a few general categories rather than in a dozen or more.
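The article doesn’t name the statistical method, so the following is not the authors’ analysis. It illustrates one common way to turn pairwise similarity ratings into groups: average-linkage hierarchical clustering, which repeatedly merges the two closest groups until the desired number remains. The six terms, their similarity values, the default of 20 for unlisted pairs and the conversion of similarity to distance as 100 minus similarity are all hypothetical assumptions.

```python
from itertools import combinations


def average_linkage(
    terms: list[str],
    dissim: dict[tuple[str, str], float],
    n_clusters: int,
) -> list[frozenset[str]]:
    """Merge the two closest clusters until n_clusters remain.

    `dissim` maps an alphabetically sorted (term, term) pair to a distance.
    Cluster distance is the mean distance over all cross-cluster term pairs.
    """
    clusters = [frozenset([t]) for t in terms]

    def d(a: str, b: str) -> float:
        return dissim[tuple(sorted((a, b)))]

    def cluster_dist(c1: frozenset[str], c2: frozenset[str]) -> float:
        return sum(d(a, b) for a in c1 for b in c2) / (len(c1) * len(c2))

    while len(clusters) > n_clusters:
        # Find the closest pair of clusters and replace them with their union.
        c1, c2 = min(combinations(clusters, 2), key=lambda p: cluster_dist(*p))
        clusters = [c for c in clusters if c not in (c1, c2)] + [c1 | c2]
    return clusters


# Hypothetical mean similarities (0-100); unlisted pairs default to 20.
terms = ["brightness", "clarity", "width", "depth", "noise", "hum"]
similarity = {
    ("brightness", "clarity"): 82,
    ("depth", "width"): 78,
    ("hum", "noise"): 90,
}
dissim = {
    pair: 100 - similarity.get(pair, 20)
    for pair in combinations(sorted(terms), 2)
}

groups = average_linkage(terms, dissim, n_clusters=3)
print(sorted(sorted(g) for g in groups))
# [['brightness', 'clarity'], ['depth', 'width'], ['hum', 'noise']]
```

With real data, the number of clusters would be chosen by inspecting the merge distances rather than fixed in advance; a library such as SciPy’s hierarchical clustering module would normally replace this hand-rolled loop.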

I found the end result thought-provoking … and reassuringly simple. The authors ended up with three primary categories: timbre, space and defects.

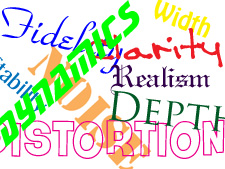

Timbre relates to tone quality, and includes such characteristics as homogeneity, fidelity, sharpness, realism, richness, clarity and brightness.

Space refers to the three-dimensional nature of the sound; it includes terms such as width, depth, envelopment, localization and reverberation.

Defects include flaws in the audio, such as distortion, noise and hum.

For all intents and purposes, anyone who’s experienced at evaluating audio products already uses these categories to some extent, although probably not consciously. But having the evaluation vocabulary so precisely defined may make the results of audio assessments clearer and more useful. Of course, only time will tell whether the authors’ work is embraced by the audio researchers and reviewers who evaluate audio products …